OBS Studio Under the Hood: How OBS Studio Works for Pro Live Streaming

OBS Studio looks simple on the surface—add sources, build scenes, hit Start Streaming—but under the hood it’s a real-time media engine that has to keep video compositing, audio mixing, and encoding + network delivery stable for hours. If you’re a radio DJ, music streamer, podcaster, church broadcaster, school station engineer, or live event producer, understanding OBS internals makes your streams more reliable, lower-latency, and easier to troubleshoot.

This module explains OBS’s architecture (render loop, audio thread, outputs), how capture sources feed the compositor, how audio stays in sync, and how encoders and protocols (RTMP/SRT) ship the stream. We’ll end with practical broadcast workflows that pair OBS with Shoutcast hosting and AutoDJ for true 24/7 uptime—while highlighting why Shoutcast Net’s flat-rate unlimited model beats Wowza’s expensive per-hour/per-viewer billing and legacy Shoutcast limitations.

Planned Table of Contents

- 1) OBS core architecture: render loop, audio thread, outputs

- 2) Sources & capture: game/window/display, cameras, media, NDI

- 3) Scene compositing: filters, scaling, color formats, GPU path

- 4) Audio engine: sample rates, resampling, sync, monitoring, VSTs

- 5) Encoding pipeline: x264 vs NVENC/AMF/QSV, CBR/VBR, keyframes

- 6) Protocols & delivery: RTMP/SRT, reconnect logic, latency tradeoffs

- 7) Broadcast workflows: OBS + Shoutcast Net, AutoDJ backup, 24/7 uptime

Why this matters

- Keep streams stable for long shows and services

- Predict CPU/GPU usage and avoid dropped frames

- Fix A/V sync without guesswork

- Choose the right protocol for latency and reliability

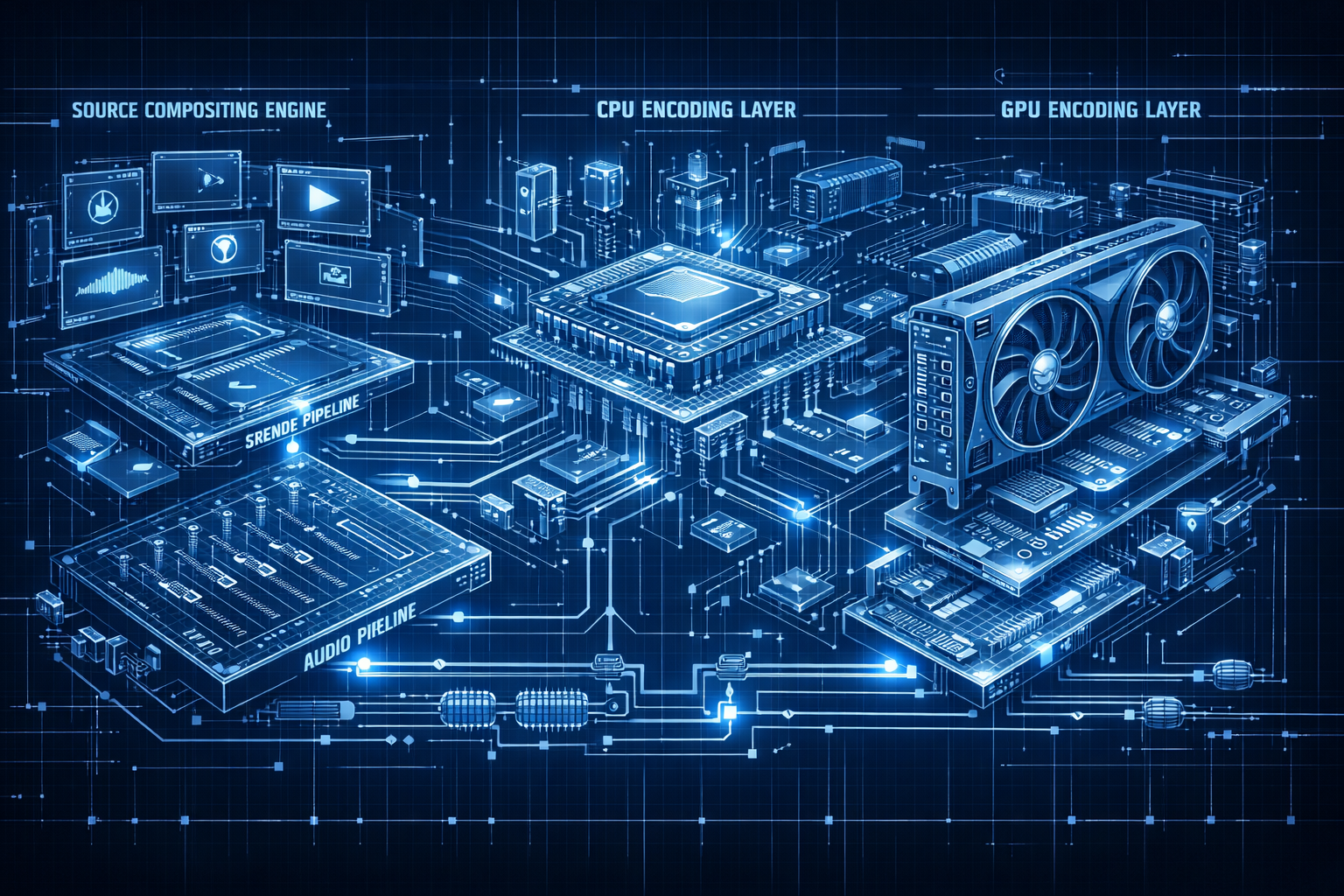

OBS core architecture: render loop, audio thread, outputs

OBS is essentially a set of real-time pipelines that run in parallel:

- Video render/composite loop (GPU-driven): draws your scenes every frame.

- Audio graph (CPU-driven): captures, resamples, mixes, applies filters, and timestamps audio.

- Outputs: streaming (RTMP/SRT), recording (MKV/MP4), virtual camera, replay buffer, etc.

The render loop: frames are the “clock” for video

Your OBS FPS setting (e.g., 30 or 60) defines how often OBS tries to produce a composited frame, which gives it a fixed time budget per frame (about 33.3 ms at 30 FPS, 16.7 ms at 60 FPS). Each frame is rendered by the GPU using the active scene: sources are sampled, transformed, filtered, blended, and then the final frame is delivered to the encoder. If the GPU can’t keep up, the Stats window reports frames missed due to rendering lag. If the encoder can’t keep up, it reports frames skipped due to encoding lag.

The audio thread: continuous time, not “per-frame”

Audio is processed in blocks (buffers) based on sample rate (typically 48 kHz) and the audio subsystem. OBS timestamps audio continuously and then aligns it to video for output. That alignment step is why audio settings (sample rate, monitoring device, and per-source sync offsets) matter so much for podcasts, live DJ sets, and worship services.
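To make the difference between the audio and video clocks concrete, here is a minimal Python sketch of the arithmetic. The function names are illustrative, and the 1024-sample block is the frame size of AAC-LC rather than a value taken from OBS internals.

```python
from fractions import Fraction


def samples_per_video_frame(sample_rate_hz: int, fps: int) -> Fraction:
    """Exact number of audio samples that span one video frame."""
    return Fraction(sample_rate_hz, fps)


def block_duration_ms(block_samples: int, sample_rate_hz: int) -> float:
    """Duration of one audio block in milliseconds."""
    return 1000.0 * block_samples / sample_rate_hz


if __name__ == "__main__":
    print(samples_per_video_frame(48000, 60))  # whole number of samples
    print(samples_per_video_frame(44100, 24))  # not a whole number
    print(block_duration_ms(1024, 48000))
```

When the result is not a whole number, audio blocks cannot line up with frame boundaries, which is one reason audio carries its own timestamps instead of being counted per frame.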

Outputs: one mix, many destinations

OBS can produce multiple outputs, but each output is a real workload: it may require a separate encoder session, disk I/O (recording), or network I/O (streaming). Advanced production setups often run one primary stream output plus a local recording in MKV for safety.

                +------------------+
    Sources --->|   Scene Graph    |----> GPU Composite Frame (RGBA/NV12)
    (Audio/Video| (Transforms +    |----> Filters (GPU/CPU)
     Capture)   |  Filters)        |
                +---------+--------+
                          |
                          v
                   +------+------+
                   |  Encoders   |----> Stream Output (RTMP/SRT)
                   | (x264/NVENC |----> File Output (MKV/MP4)
                   |  AMF/QSV)   |----> Virtual Cam / Preview
                   +------+------+
                          ^
                          |
                   +------+------+
                   | Audio Graph |
                   |  Mix + VST  |
                   +-------------+

Pro Tip

When troubleshooting “stutter,” always classify the failure first: render lag (GPU), encoding lag (CPU/GPU encoder), or network dropped frames (bandwidth/RTMP stability). OBS’s Stats window is your first diagnostic tool.
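The classification step can be sketched in Python as a small helper. This is an illustration only: `StatsSnapshot` is a hypothetical structure filled in by hand from the counters in the Stats window, and the 1% threshold is an assumption, not an OBS default.

```python
from dataclasses import dataclass


@dataclass
class StatsSnapshot:
    """Hypothetical counters copied by hand from the OBS Stats window."""
    render_missed: int    # frames missed due to rendering lag
    render_total: int
    encode_skipped: int   # frames skipped due to encoding lag
    encode_total: int
    network_dropped: int  # frames dropped by the network output
    network_total: int


def classify_stutter(s: StatsSnapshot, threshold: float = 0.01) -> list[str]:
    """Return the pipeline stages whose loss ratio exceeds the threshold."""
    stages = {
        "render lag (GPU)": (s.render_missed, s.render_total),
        "encoding lag (encoder)": (s.encode_skipped, s.encode_total),
        "network dropped frames": (s.network_dropped, s.network_total),
    }
    return [name for name, (bad, total) in stages.items()
            if total > 0 and bad / total > threshold]
```

For example, a snapshot with 50 skipped frames out of 1000 encoded and clean render and network counters returns only the encoding stage, which points at the encoder rather than the scene or the connection.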

For broadcasters building a station-grade pipeline, this separation matters: you can optimize your source composition and GPU path, then choose an encoder, then choose the best delivery method—without guessing.

Sources & capture: game/window/display, cameras, media, NDI

In OBS, a Source is any producer of video frames and/or audio samples. Each source has its own capture method, timing behavior, color format, and CPU/GPU cost. Your overall stability depends heavily on choosing the right capture type for the job.

Display Capture vs Window Capture vs Game Capture

Display Capture grabs the entire screen. It’s universal but can be heavier and can accidentally capture private windows. Window Capture targets a specific application window. Game Capture hooks into a game’s rendering pipeline (often the most efficient and smooth for games, but can conflict with anti-cheat or certain render APIs).

| Capture Type | Best For | Pros | Cons |

|---|---|---|---|

| Display Capture | Slides, desktop demos, multi-app workflows | Works with anything | Higher overhead; privacy risk; can capture notifications |

| Window Capture | Browser-based radio automation, DAW, chat window | More controlled; less clutter | Some apps redraw strangely; may capture black window on some GPUs |

| Game Capture | Games, some full-screen renderers | Low-latency; smooth frame pacing | Can fail with certain APIs/anti-cheat; setup varies |

Cameras and capture cards: UVC, HDMI ingest, and sync

USB webcams typically appear as UVC devices. HDMI cameras usually require a capture card, which exposes a video device plus an optional audio device. A common pro workflow is to capture the camera video through the card and bring audio in separately through an audio interface, then apply OBS audio sync offsets if the two are out of step.

Media sources: files, playlists, and live reliability

Media Sources can decode local files, but decoding costs CPU/GPU resources and can introduce timing issues if the file’s timebase is odd. For 24/7 channels, prefer broadcast-safe formats (constant frame rate video, AAC audio, sane keyframe intervals) and test for loop behavior.
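One way to test a file for constant frame rate is to look at the gaps between frame timestamps. The sketch below assumes the timestamps (in seconds) have already been extracted with a tool such as ffprobe. The function name and the 1 ms tolerance are illustrative choices.

```python
def is_constant_frame_rate(timestamps_s: list[float],
                           tolerance_s: float = 0.001) -> bool:
    """True if every gap between consecutive frame timestamps is nearly equal."""
    if len(timestamps_s) < 3:
        return True  # too few frames to show any variation
    gaps = [b - a for a, b in zip(timestamps_s, timestamps_s[1:])]
    return max(gaps) - min(gaps) <= tolerance_s


if __name__ == "__main__":
    steady = [i / 30 for i in range(10)]          # evenly spaced 30 fps frames
    uneven = [0.0, 0.033, 0.066, 0.150, 0.183]    # one long gap
    print(is_constant_frame_rate(steady), is_constant_frame_rate(uneven))
```

A file that fails this check is a candidate for re-encoding to constant frame rate before it goes into a 24/7 playlist.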

NDI and IP contribution

NDI sources bring video/audio over LAN from another PC (e.g., a graphics machine or a DAW workstation). This is powerful for school stations and churches that want a “control room” topology, but it adds network dependency and often uses higher bitrates internally. Keep NDI on wired Ethernet and consider a dedicated switch for busy venues.

Pro Tip

If you’re streaming long-form shows (DJ sets, sermons, sports), pick capture methods that minimize complexity: avoid stacking multiple browser captures, limit live scaling, and prefer stable device paths. Fewer moving parts means fewer mid-show surprises.

At the infrastructure level, OBS is the capture + production stage; Shoutcast Net is the delivery stage—designed to stream from any device to any device and scale to unlimited listeners with a flat-rate model.

Scene compositing: filters, scaling, color formats, GPU path

Every scene in OBS is a 2D (and sometimes 3D-like) composition: sources have transforms (position, scale, crop, rotation), blending modes, and filters. OBS tries to keep this work on the GPU because the GPU is optimized for parallel pixel processing.

Scene graph: ordering, blending, and alpha

Sources are rendered in order from bottom to top. Alpha blending is used for overlays, lower thirds, and PNG graphics. If you use chroma key filters, OBS performs keying (often GPU accelerated) and composites the result. Too many expensive filters (blur, sharpen, heavy LUT stacks) can increase render time and cause missed frames.
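Bottom-to-top alpha blending can be illustrated for a single pixel in Python. This is a CPU sketch of the standard straight-alpha "over" formula and not how OBS implements compositing on the GPU. The function names and the 0.0 to 1.0 color range are assumptions for the example.

```python
def blend_over(src_rgb, src_alpha, dst_rgb):
    """Straight-alpha 'over': place src on top of an opaque destination pixel."""
    return tuple(s * src_alpha + d * (1.0 - src_alpha)
                 for s, d in zip(src_rgb, dst_rgb))


def composite(layers, background=(0.0, 0.0, 0.0)):
    """Composite layers bottom to top; each layer is (rgb, alpha)."""
    pixel = background
    for rgb, alpha in layers:
        pixel = blend_over(rgb, alpha, pixel)
    return pixel


if __name__ == "__main__":
    # Opaque red camera layer with a half-transparent blue overlay on top.
    print(composite([((1.0, 0.0, 0.0), 1.0), ((0.0, 0.0, 1.0), 0.5)]))
```

Because each layer is blended against the result of everything beneath it, changing the source order changes the output, which is why ordering in the Sources list matters.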

Scaling: base canvas vs output resolution

OBS separates Base (Canvas) Resolution (your production layout) from Output (Scaled) Resolution (what you stream/record). Scaling happens during render/output, and the chosen scaler matters:

- Bilinear: fast, softer

- Bicubic: sharper, moderate cost

- Lanczos: sharpest, highest cost

For typical 1080p production streaming at 720p, Bicubic is a strong default. For radio/podcast visuals (album art + waveform), you can often lower output resolution and FPS to reduce load while keeping audio pristine.

Color formats and GPU readback

Internally, many sources render in RGB, but encoders generally prefer YUV formats (like NV12 or I420). Conversions can happen on the GPU; however, some paths require GPU readback (copying frames from GPU to CPU memory), which is expensive and can cause stutter on weaker systems.
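The cost of readback is easier to judge with numbers. The following Python sketch computes raw frame sizes for the formats named above, assuming 8 bits per channel. The helper names are illustrative.

```python
BYTES_PER_PIXEL = {
    "RGBA": 4.0,  # 8 bits each for R, G, B, A
    "NV12": 1.5,  # full-resolution luma plus 2x2-subsampled interleaved chroma
    "I420": 1.5,  # same 4:2:0 sampling with planar chroma
}


def frame_bytes(width: int, height: int, fmt: str) -> int:
    """Size of one uncompressed frame in bytes."""
    return int(width * height * BYTES_PER_PIXEL[fmt])


def readback_mb_per_s(width: int, height: int, fmt: str, fps: int) -> float:
    """Raw data rate in MB/s if every frame is copied from GPU to CPU memory."""
    return frame_bytes(width, height, fmt) * fps / 1_000_000


if __name__ == "__main__":
    print(frame_bytes(1920, 1080, "RGBA"))
    print(frame_bytes(1920, 1080, "NV12"))
    print(readback_mb_per_s(1280, 720, "NV12", 60))
```

Converting and scaling on the GPU before any copy means a 720p NV12 frame crosses the bus instead of a 1080p RGBA frame, which is a sixth of the data per frame.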

Practical takeaway: keep your pipeline consistent—avoid mixing unusual color spaces, minimize “capture of capture” (e.g., capturing a preview window of another app), and use hardware encoders when possible to reduce CPU copies.

    Base Canvas (e.g., 1920x1080 @ 60)
            |
            v
    [GPU Composite in RGB/Linear]
            |
            +--> Filters (LUT, Key, Mask)
            |
            v
    [Scale to Output (e.g., 1280x720)]
            |
            v
    [Convert to YUV (NV12/I420) for Encoder]
            |
            v
    Encoder + Muxer + Network

Pro Tip

If you see “render lag,” reduce GPU work first: lower FPS, simplify scenes, avoid heavy filters, and pick a faster scaler. Encoding tweaks won’t fix a GPU-bound compositor.

This is one reason professional broadcasters split responsibilities: OBS handles clean compositing, while delivery and scaling to large audiences is handled by a streaming platform built for it—like Shoutcast Net with SSL streaming, 99.9% uptime, and unlimited listeners.

Audio engine: sample rates, resampling, sync, monitoring, VSTs

For radio, podcast, and music streaming, audio is the product. OBS’s audio engine is designed to mix multiple live inputs (mic, desktop audio, capture card audio, network audio) while keeping everything aligned to the output timeline.

Sample rate fundamentals (44.1 kHz vs 48 kHz)

Most video workflows are 48 kHz. Many music/radio workflows originate at 44.1 kHz. If your devices disagree, OBS will resample—sometimes more than once—which can add CPU usage and small sync drift risks. For mixed video + audio streaming, set OBS to 48 kHz and ensure your audio interface and OS devices match.

Resampling and drift: why long shows expose problems

Two devices can both claim “48 kHz” yet run on slightly different clocks. Over time, that mismatch causes drift unless the engine continuously resamples. OBS does a solid job here, but you can help by consolidating audio into a single interface (or using digital sync/clocked devices in advanced setups).
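A quick calculation shows why drift only becomes audible on long shows. The Python sketch below assumes the clock mismatch is expressed in parts per million (ppm). The 50 ppm figure in the example is an illustrative value, not a measurement of any particular device.

```python
def drift_ms(clock_error_ppm: float, duration_hours: float) -> float:
    """Accumulated offset between two clocks that differ by clock_error_ppm."""
    return clock_error_ppm * 1e-6 * duration_hours * 3600 * 1000


def actual_rate_hz(nominal_hz: float, clock_error_ppm: float) -> float:
    """Effective sample rate of a device whose clock runs fast or slow."""
    return nominal_hz * (1 + clock_error_ppm * 1e-6)


if __name__ == "__main__":
    print(actual_rate_hz(48000, 50))  # a "48 kHz" device running slightly fast
    print(drift_ms(50, 3))            # offset after a three-hour show
```

Under these assumptions the offset is a few milliseconds after one minute but roughly half a second after three hours, which is well past the point where lip-sync errors become visible.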

Sync tools: per-source offsets and alignment strategy

Video sources (especially capture cards and IP sources) often add latency. If your mic is “ahead” of video, you can add an audio sync offset to delay the mic. Conversely, if video is ahead (rare), you may need to delay video using filters or add latency elsewhere. The goal is consistent lip-sync or beat alignment.
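Choosing an offset is simple arithmetic once you know both latencies. This Python sketch assumes you have measured the video path delay in frames and the audio path delay in milliseconds (for example with a clap test). The function names and sample numbers are illustrative.

```python
def frames_to_ms(frames: float, fps: float) -> float:
    """Convert a delay measured in video frames to milliseconds."""
    return 1000.0 * frames / fps


def audio_sync_offset_ms(video_latency_ms: float, audio_latency_ms: float) -> int:
    """Delay to add to the audio source so it lands together with the video.

    A positive result means audio arrives early and should be delayed.
    A negative result means video is ahead, so video needs the delay instead.
    """
    return round(video_latency_ms - audio_latency_ms)


if __name__ == "__main__":
    video_delay = frames_to_ms(3, 30)  # capture card adds three frames at 30 FPS
    print(audio_sync_offset_ms(video_delay, 10))
```

Measure again after changing FPS or swapping capture hardware, since a delay expressed in frames changes in milliseconds when the frame rate changes.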

Monitoring: headphones without doubling or delay

OBS monitoring can route audio to your speakers/headphones, but it may introduce audible delay (monitoring latency). For DJs and musicians, prefer direct monitoring from the audio interface, and use OBS monitoring mainly for confidence checks.

VSTs and filters: broadcast polish

OBS supports VST plugins and built-in filters like noise suppression, noise gate, compressor, limiter, and EQ. A clean chain for spoken word often looks like:

Mic Input Filter Chain (typical):

1) Noise Suppression (light)

2) Noise Gate (gentle)

3) EQ (high-pass ~80-120 Hz)

4) Compressor (2:1 to 4:1)

5) Limiter (ceiling around -1 dB)

Pro Tip

Avoid “fixing” bad gain staging with heavy compression. Set proper input levels first (peaks around -6 dB), then compress lightly, then limit. Your audience will hear the difference—especially on mobile speakers.
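To see what a ratio and a ceiling do to levels, here is a minimal Python sketch of the static (steady-state) compressor and limiter curves. It ignores attack, release, and makeup gain. The -18 dB threshold is an assumed example value, not a recommendation from OBS.

```python
def compress_db(level_db: float, threshold_db: float = -18.0,
                ratio: float = 3.0) -> float:
    """Static compressor curve: above the threshold, output rises 1 dB per `ratio` dB of input."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


def limit_db(level_db: float, ceiling_db: float = -1.0) -> float:
    """Hard ceiling: nothing passes above ceiling_db."""
    return min(level_db, ceiling_db)


def db_to_linear(level_db: float) -> float:
    """Convert a dB level to a linear amplitude factor."""
    return 10 ** (level_db / 20.0)


if __name__ == "__main__":
    peak = -6.0  # a well-staged input peak
    print(limit_db(compress_db(peak)))
    print(db_to_linear(peak))
```

With peaks near -6 dB and a 3:1 ratio, the compressor only trims about 8 dB off the loudest moments and the limiter rarely engages, which is the light-touch behavior described above.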

If your primary stream ever drops, a platform-side fallback matters for audio-first broadcasters. Shoutcast Net can keep your station online with AutoDJ, so listeners don’t hear dead air even if OBS or your ISP fails.

Encoding pipeline: x264 vs NVENC/AMF/QSV, CBR/VBR, keyframes

After OBS produces frames and a mixed audio stream, it must compress them into a deliverable format. Encoding is where quality, CPU/GPU load, and compatibility collide. For most live streaming, the output is H.264 video + AAC audio, carried in an FLV container for RTMP or in MPEG-TS for SRT.

Software vs hardware encoders

x264 (software) runs on CPU and can produce excellent quality per bitrate, especially at slower presets—but it can overload CPUs during complex scenes. Hardware encoders offload work:

- NVENC (NVIDIA): strong quality, very stable, great for gaming and multi-source productions.

- QSV (Intel Quick Sync): efficient for many desktops/laptops, excellent for low-power rigs.

- AMF (AMD): improved greatly in recent generations; test your specific GPU/driver.

| Encoder | Primary Resource | Best Use | Tradeoff |

|---|---|---|---|

| x264 | CPU | Highest control, quality-tuning | Can cause encoding lag on busy scenes |

| NVENC | GPU (dedicated ASIC) | Live streaming + gaming + stability | Slightly less efficient than slow x264 at very low bitrates |

| QSV | iGPU media engine | Low-power, compact PCs | Quality varies by generation; avoid overloading iGPU with display tasks |

| AMF | GPU media engine | AMD-centric systems | Driver tuning matters; test before going live |

Rate control: why CBR still dominates live

CBR (Constant Bitrate) is common for live because it’s predictable for networks and ingest servers. VBR can improve quality but may spike above your available upload, increasing dropped frames. For radio/podcast-style video streams (static visuals), CBR at a modest bitrate is extremely stable.
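The risk with VBR can be shown with two streams that have the same average bitrate. In this Python sketch the per-second bitrate samples and the 5000 kbps upload limit are made-up illustrative numbers.

```python
def average_kbps(samples_kbps: list[float]) -> float:
    """Mean bitrate over the sampled seconds."""
    return sum(samples_kbps) / len(samples_kbps)


def seconds_over_budget(samples_kbps: list[float], upload_kbps: float) -> int:
    """Count the seconds in which the encoder produced more than the link can carry."""
    return sum(1 for kbps in samples_kbps if kbps > upload_kbps)


if __name__ == "__main__":
    cbr = [4000, 4000, 4000, 4000, 4000, 4000]
    vbr = [2500, 3000, 6500, 6000, 3000, 3000]  # same average, with spikes
    for name, samples in (("CBR", cbr), ("VBR", vbr)):
        print(name, average_kbps(samples), seconds_over_budget(samples, 5000))
```

Both streams average the same bitrate, but only the VBR stream exceeds the link for part of the time, and those are the seconds where frames queue up and get dropped.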

Keyframes (GOP): seeking, recovery, and platform expectations

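A regular keyframe interval (often 2 seconds) lets players join quickly and helps decoders recover from packet loss, because decoding can only start at a keyframe. Too long a GOP increases join time and reduces resilience. Too short a GOP increases bitrate overhead, since keyframes are much larger than the frames between them. For many workflows: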

- Keyframe interval: 2s (typical)

- Profile: High (if allowed), else Main

- B-frames: acceptable unless you need ultra-low latency
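The keyframe interval translates directly into a GOP length in frames and a wait time for new viewers. This Python sketch covers only the wait for the next keyframe and assumes viewers join at a uniformly random moment. Player buffering adds further delay that is not modeled here.

```python
def gop_length_frames(keyframe_interval_s: float, fps: float) -> int:
    """Number of frames from one keyframe to the next."""
    return round(keyframe_interval_s * fps)


def average_join_wait_s(keyframe_interval_s: float) -> float:
    """Mean wait for the next keyframe if viewers join at a random moment."""
    return keyframe_interval_s / 2


def worst_case_join_wait_s(keyframe_interval_s: float) -> float:
    """A viewer who joins just after a keyframe waits almost a full interval."""
    return keyframe_interval_s


if __name__ == "__main__":
    print(gop_length_frames(2, 60), average_join_wait_s(2), worst_case_join_wait_s(2))
```

Some encoder settings express the interval in frames rather than seconds, so it helps to know both numbers for your chosen FPS.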

Audio encoding: keep it clean and compatible

AAC at 128–192 kbps is a common target. For music-first streams, 192 kbps AAC can be worth it if bandwidth allows. Keep sample rate consistent (usually 48 kHz in video workflows) to avoid extra resampling steps.

Pro Tip

If your show is mission-critical (church services, school events), choose a hardware encoder (NVENC/QSV/AMF) and leave CPU headroom for scene compositing, audio filters, and browser sources. Stability beats theoretical quality gains.

When budgeting, remember that delivery costs can dwarf encoding costs on some platforms. Unlike Wowza’s expensive per-hour/per-viewer billing, Shoutcast Net is built around a flat-rate unlimited approach (starting at $4/month) so you can scale without worrying about surprise invoices.

Protocols & delivery: RTMP/SRT, reconnect logic, latency tradeoffs

Protocols define how your encoded stream gets from OBS to a server. OBS commonly publishes via RTMP (ubiquitous, supported everywhere) or SRT (more resilient over unstable networks). Your choice affects reliability, firewall traversal, and latency.

RTMP: the workhorse ingest protocol

RTMP is widely supported and easy to configure: you provide a server URL and stream key. RTMP typically rides on TCP, which retransmits lost packets. That helps quality but can increase latency under packet loss (because retransmissions delay the stream).

SRT: resilience with tunable latency

SRT runs over UDP and adds its own reliability and encryption options. It can be more stable than RTMP over long-distance or jittery connections (cellular bonding, event venues), because it can adapt retransmission behavior within a target latency window.
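A rough way to reason about the latency window is to ask how many retransmission round trips fit inside it. The Python sketch below is a deliberately simplified model that assumes each retry costs about one round-trip time. It is not the SRT algorithm itself, and the numbers are illustrative.

```python
def retransmit_attempts(latency_window_ms: float, rtt_ms: float) -> int:
    """Approximate number of retransmission round trips that fit in the window."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return int(latency_window_ms // rtt_ms)


if __name__ == "__main__":
    # Same 120 ms window, local venue link versus a long-distance link.
    print(retransmit_attempts(120, 40))
    print(retransmit_attempts(120, 150))
```

When the window is shorter than one round trip, a lost packet cannot be recovered in time, so long-distance or cellular links generally need a larger latency setting than a local wired link.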

Reconnect logic: what OBS does during network issues

OBS includes auto-reconnect options (retry delay, maximum retries). During an outage, OBS may keep encoding while attempting to re-establish the connection; depending on your settings, you may also want to stop/restart the stream to force a clean handshake. For stations, the big question is: what do listeners hear while you reconnect?
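The retry behavior can be pictured as a simple loop. This Python sketch is an illustration of the retry-delay and maximum-retries idea, not OBS’s actual implementation. The `connect` callable, the default values, and the return convention are all assumptions.

```python
import time
from typing import Callable


def reconnect(connect: Callable[[], bool],
              retry_delay_s: float = 2.0,
              max_retries: int = 20,
              sleep: Callable[[float], None] = time.sleep) -> int:
    """Try to reconnect; return the attempt number that succeeded, or -1 if all failed."""
    for attempt in range(1, max_retries + 1):
        if connect():
            return attempt
        if attempt < max_retries:
            sleep(retry_delay_s)  # wait before the next attempt
    return -1


if __name__ == "__main__":
    outcomes = iter([False, False, True])  # simulated: third attempt succeeds
    print(reconnect(lambda: next(outcomes), retry_delay_s=0.1, max_retries=5))
```

The total time off air is roughly the number of failed attempts multiplied by the retry delay, so a short delay with more retries usually recovers faster from brief outages than a long delay with few retries.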

This is where a host-side safety net matters: with Shoutcast Net + AutoDJ, you can keep a continuous program feed (music rotation, prerecorded messages, station IDs) even when the live encoder is offline.

Latency tradeoffs: very low latency (about 3 seconds) vs stability

Low latency depends on the entire chain: encoder buffering, protocol buffering, server settings, and player behavior. Some workflows target very low latency of about 3 seconds end-to-end, but that generally reduces tolerance for packet loss and CPU spikes. For interactive shows, you may accept slightly lower quality or slightly higher bitrate to keep latency down. For worship and broadcast radio, stability usually wins.

Platform reach: simulcasting and restreaming

Creators often want to restream to Facebook, Twitch, and YouTube while also serving their own site/app listeners. Architecturally, it’s safer to send one clean upstream to a primary server and then restream from there, rather than asking OBS to upload multiple streams (which multiplies bandwidth and failure modes).

Modern streaming stacks aim to convert between any pair of streaming protocols (RTMP, RTSP, WebRTC, SRT, etc.), but you should still keep your OBS side simple: one protocol, one destination, stable bitrate, predictable keyframes.

Pro Tip

Set OBS to a bitrate your connection can sustain at 70% of your tested upload speed, not 95%. The remaining headroom absorbs Wi‑Fi contention, ISP jitter, and OS background traffic—dramatically reducing dropped frames.
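The 70% rule is easy to turn into a number. This Python sketch uses integer arithmetic in kbps. The function name, the 160 kbps audio default, and the `destinations` parameter (for the case where OBS itself uploads to several services) are assumptions for the example.

```python
def max_video_bitrate_kbps(upload_kbps: int, audio_kbps: int = 160,
                           headroom_pct: int = 70, destinations: int = 1) -> int:
    """Largest video bitrate that keeps every upload inside the headroom budget."""
    if destinations < 1:
        raise ValueError("destinations must be at least 1")
    per_stream_total = upload_kbps * headroom_pct // 100 // destinations
    return max(0, per_stream_total - audio_kbps)


if __name__ == "__main__":
    # Tested upload of 10 Mbps: one upstream versus two direct uploads from OBS.
    print(max_video_bitrate_kbps(10_000))
    print(max_video_bitrate_kbps(10_000, destinations=2))
```

Doubling the number of direct uploads more than halves the video bitrate available to each, since audio is sent once per destination as well.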

Delivery economics matter too. Many legacy solutions and “enterprise” providers push complex, expensive billing models. Shoutcast Net emphasizes predictable costs, reliability, and broadcaster-friendly features instead of Wowza’s expensive per-hour/per-viewer billing.

Broadcast workflows: OBS + Shoutcast Net, AutoDJ backup, 24/7 uptime

OBS is your production switcher; Shoutcast Net is your broadcast delivery layer. For radio DJs, podcasters, churches, and schools, the goal is simple: stay online, sound great, and scale to all listeners with predictable costs.

Workflow A: Live shows with automatic fallback

A robust station pattern is:

- Primary: OBS (live mic + music + visuals if needed)

- Fallback: AutoDJ playlist rotation on the server

- Delivery: Shoutcast Net stream endpoint with SSL streaming and unlimited listeners

If OBS disconnects, the station still plays content. This solves the #1 problem in volunteer-driven environments (schools/churches) where a laptop might sleep, a USB cable gets bumped, or the internet drops.
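The fallback pattern can be modeled in a few lines of Python. This is a hypothetical sketch of the idea only: it does not describe how Shoutcast Net or AutoDJ is implemented, and the one-sample-per-second connection log is an assumed input format.

```python
def program_timeline(live_connected: list[bool]) -> list[str]:
    """For each one-second tick, pick the source that listeners hear."""
    return ["live" if connected else "autodj" for connected in live_connected]


def fallback_seconds(live_connected: list[bool]) -> int:
    """Seconds that would have been dead air without a server-side fallback."""
    return sum(1 for connected in live_connected if not connected)


if __name__ == "__main__":
    log = [True, True, False, False, False, True]  # laptop sleeps for 3 seconds
    print(program_timeline(log))
    print(fallback_seconds(log))
```

The point of the model is that the listener-facing timeline has no gaps: every tick maps to some source, regardless of what happens to the live encoder.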

Workflow B: Events and services with archiving

For worship services, graduations, and concerts:

- Stream live from OBS while recording locally (MKV recommended)

- Use conservative encoder settings to avoid mid-event overload

- Post-produce the MKV recording later for highlights

Workflow C: Multi-platform plus owned audience

If you want to restream to Facebook, Twitch, and YouTube and also serve listeners directly, build around one principle: own your primary feed. Shoutcast Net provides the foundation to reach your audience without being locked into one social platform’s algorithm changes or ingest quirks.

Why Shoutcast Net over legacy Shoutcast limits and Wowza pricing

Traditional deployments often run into scaling and cost issues: legacy Shoutcast limitations can constrain flexibility, and enterprise stacks may charge based on hours streamed or per-viewer usage. Wowza’s expensive per-hour/per-viewer billing can make budgeting painful for schools and churches.

Shoutcast Net is optimized for broadcasters who need predictable growth:

- $4/month starting price with a flat-rate unlimited model

- 7-day free trial to test with your exact OBS workflow

- Unlimited listeners (build your audience without penalty)

- 99.9% uptime for dependable delivery

- SSL streaming for modern browsers and secure embeds

- AutoDJ for always-on fallback and scheduling

Practical setup checklist (OBS + host)

Use this checklist before you go live:

- Video: confirm base/output resolution, FPS, and scaler; test worst-case scene

- Audio: lock all devices to the same sample rate; verify mic chain; test monitoring

- Encoder: pick hardware encoder when possible; set keyframes to 2s; choose CBR for stability

- Network: use wired Ethernet; cap bitrate below sustainable upload; enable OBS auto-reconnect

- Continuity: configure AutoDJ so your station never goes silent

Operational Pattern (recommended)

--------------------------------

1) OBS produces program (A/V)

2) One upstream publish to your stream host

3) Host delivers to listeners at scale (SSL, unlimited)

4) AutoDJ runs as safety net when live is offline

5) Optional: restream/simulcast from host-side tools

Pro Tip

For 24/7 stations, treat OBS like a “show device,” not the station itself. The station lives on the server. Use Shoutcast hosting + AutoDJ so you can swap laptops, update OBS, or reboot without losing listeners.

When you’re ready to build a reliable broadcast pipeline that can stream from any device to any device, start with a plan that fits your budget and audience growth. Explore plans in the shop, compare streaming options (including Icecast), and activate your 7-day trial to test OBS end-to-end before your next big show.